Quantification of Autism Symptoms using Speech Signal Processing

Autism Spectrum Disorder (ASD) is a neurodevelopmental disorder that involves difficulties in social communication. Some of these difficulties are apparent in the way children with ASD communicate vocally: by speaking, crying, and shouting. Previous studies have shown, for example, that children with ASD speak with more variable pitch, repeat phrases for no apparent reason (i.e., echolalia), and cry and shout in atypical ways. Speech processing algorithms that can detect and quantify these and other features of speech that are indicative of ASD are likely to have great clinical value. Such tools could help in assessing early ASD risk, estimating the initial severity of social problems in individual children, and evaluating their improvement or deterioration over time.

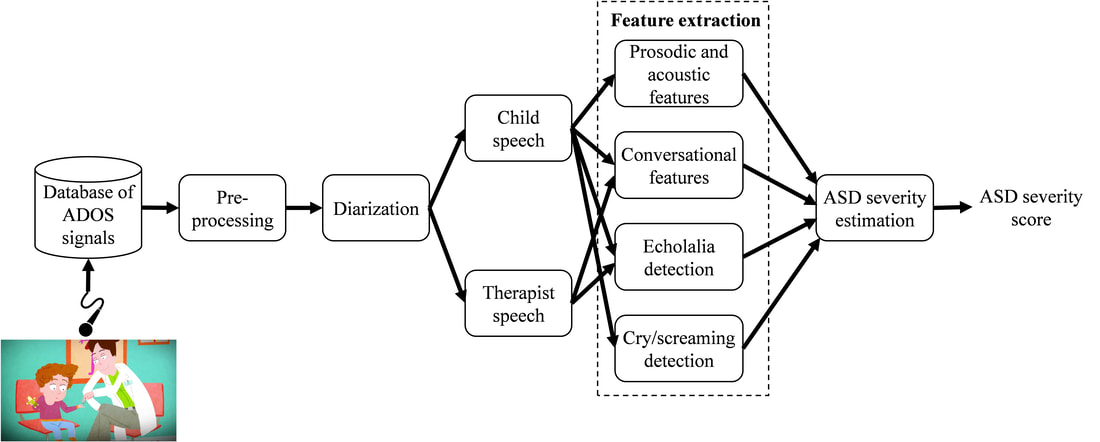

In this research we aim to develop and evaluate speech analysis tools for quantifying autistic speech characteristics in 2- to 6-year-old Hebrew-speaking children. We use recordings of children who participated in an Autism Diagnostic Observation Schedule (ADOS) assessment.

In the first project in this research we extracted 60 prosodic, acoustic, and conversational features from speech recordings of 72 Hebrew-speaking children who were recorded during ADOS assessments. Twenty-one of these features were significantly correlated with the children's ADOS scores (Eni et al., 2020), which represent the severity of ASD symptoms as estimated by the clinician. Using these features, we tested the ability of several deep neural network (DNN) algorithms to estimate ADOS scores and compared their performance with Linear Regression and Support Vector Regression (SVR) models. We found that a Convolutional Neural Network (CNN) yielded the best results. When trained and tested on different subsamples of the available data, this algorithm predicted ADOS scores with a mean root-mean-square error (RMSE) of 4.65 and a mean correlation of 0.72 with the true ADOS scores.
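The two evaluation measures mentioned above, RMSE and correlation averaged over different train/test subsamples, can be illustrated with a minimal sketch. It is not the pipeline used in the study: it fits an ordinary least-squares baseline with NumPy rather than a CNN, and the function names, the number of repeats, the test fraction, and the synthetic data are all assumptions made for illustration.

```python
import numpy as np


def rmse(y_true, y_pred):
    """Root-mean-square error between true and predicted scores."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


def pearson_r(y_true, y_pred):
    """Pearson correlation between true and predicted scores."""
    return float(np.corrcoef(y_true, y_pred)[0, 1])


def evaluate_linear_baseline(X, y, n_repeats=20, test_fraction=0.2, seed=0):
    """Mean RMSE and mean correlation over repeated random train/test splits.

    Hypothetical helper: a least-squares linear model stands in for the
    regressors compared in the text.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    rng = np.random.default_rng(seed)
    n = len(y)
    n_test = max(2, int(round(test_fraction * n)))
    rmses, corrs = [], []
    for _ in range(n_repeats):
        perm = rng.permutation(n)
        test_idx, train_idx = perm[:n_test], perm[n_test:]
        # Prepend a column of ones so the model learns an intercept.
        A_train = np.column_stack([np.ones(len(train_idx)), X[train_idx]])
        A_test = np.column_stack([np.ones(len(test_idx)), X[test_idx]])
        coef, *_ = np.linalg.lstsq(A_train, y[train_idx], rcond=None)
        pred = A_test @ coef
        rmses.append(rmse(y[test_idx], pred))
        corrs.append(pearson_r(y[test_idx], pred))
    return float(np.mean(rmses)), float(np.mean(corrs))


if __name__ == "__main__":
    # Synthetic stand-in data (NOT the study's recordings):
    # 72 "children" with 21 features each, and a noisy linear target.
    rng = np.random.default_rng(42)
    X = rng.normal(size=(72, 21))
    y = X @ rng.normal(size=21) + rng.normal(scale=1.0, size=72)
    mean_rmse, mean_r = evaluate_linear_baseline(X, y)
    print(f"mean RMSE = {mean_rmse:.2f}, mean r = {mean_r:.2f}")
```

Averaging over several random splits, rather than reporting a single split, reduces the dependence of the estimate on which children happen to land in the test set, which matters with a sample of this size.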

The future project will focus on echolalia (repetitive speech) detection, analysis of crying and screaming, and building a combined system for autism severity estimation. We plan to use Deep Neural Networks (DNNs) to identify and quantify these abnormalities in children who are referred for ASD diagnosis. Recordings of children without ASD who undergo the same evaluation will be used as a comparison group.
